New Generative NeRF Inpainting Technique Revolutionizes 3D and 4D Visual Content Creation

July 19, 2024

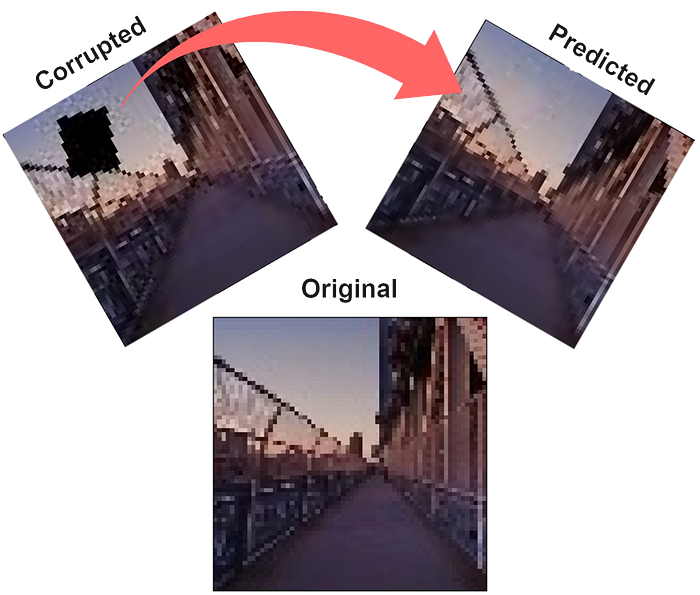

A recent study introduced generative promptable inpainting, a method for replacing or removing objects in a scene represented by a pre-trained NeRF model, given masks on the training images and a text prompt.

The technique has three goals: generating 3D and 4D visual content, guiding that generation with a text prompt, and keeping the result consistent with the existing background.

NeRF editing uses Neural Radiance Fields (NeRF) to manipulate 3D scenes and objects, enabling object removal, geometry transformations, and appearance editing.

This study focuses on generating new content consistent with the underlying NeRF, unlike existing works that focus on editing existing content.

Inpainting techniques in NeRF leverage generative models like GANs and Stable Diffusion to fill in missing pixels while maintaining fidelity to the 3D structure across viewpoints.
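To make the role of the masks concrete, the sketch below shows a common compositing step in image inpainting: generated pixels are used only inside the masked region, and the original pixels are kept everywhere else. This is an illustrative assumption, not the study's confirmed procedure; the function name `composite` and the nested-list image format are hypothetical.

```python
def composite(original, generated, mask):
    """Blend two images per pixel.

    mask value 1 -> take the generated pixel (region to inpaint)
    mask value 0 -> keep the original pixel (background to preserve)
    Images are lists of rows of numbers with identical shapes (assumed).
    """
    return [
        [m * g + (1 - m) * o for o, g, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, mask)
    ]


# Tiny 2x2 grayscale example: only the top-right pixel is masked.
original = [[1, 2], [3, 4]]
generated = [[9, 9], [9, 9]]
mask = [[0, 1], [0, 0]]
result = composite(original, generated, mask)
```

Because every unmasked pixel is copied straight from the original, the background of each training view is untouched, which is one simple way to keep new content from disturbing the rest of the scene.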

The framework involves pre-processing training images, fine-tuning NeRF for multiview consistency, and extending to 4D if necessary.

Techniques such as Score Distillation Sampling (SDS) are used to make the generated views converge on a consistent result when producing dynamic content from text.
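As a rough illustration of how Score Distillation Sampling produces an update signal, the sketch below follows its general form: add noise to a rendered view, ask a noise predictor to estimate that noise, and use the weighted difference between the estimate and the true noise as the gradient. The `predict_noise` callable, the `oracle_predictor` stand-in, and all parameter names are assumptions made for illustration. A real system would use a text-conditioned diffusion model and back-propagate this gradient into the NeRF parameters, and the study's actual model and weighting are not described here.

```python
import math
import random


def sds_gradient(rendered, predict_noise, alpha_bar_t, weight=1.0, rng=None):
    """One SDS-style gradient estimate with respect to the rendered pixels.

    rendered      : flat list of pixel values from the current render
    predict_noise : callable (noisy_pixels, alpha_bar_t) -> predicted noise
    alpha_bar_t   : cumulative noise-schedule value in (0, 1) for this step
    """
    rng = rng or random.Random()
    noise = [rng.gauss(0.0, 1.0) for _ in rendered]
    a = math.sqrt(alpha_bar_t)
    s = math.sqrt(1.0 - alpha_bar_t)
    # Forward diffusion: mix the render with Gaussian noise.
    noisy = [a * x + s * e for x, e in zip(rendered, noise)]
    predicted = predict_noise(noisy, alpha_bar_t)
    # SDS gradient: weighted (predicted noise - true noise).
    return [weight * (p - e) for p, e in zip(predicted, noise)]


def oracle_predictor(target):
    """Hypothetical stand-in for a diffusion model that 'believes' the clean
    image is `target`; it returns the noise implied by that belief."""
    def predict(noisy, alpha_bar_t):
        a = math.sqrt(alpha_bar_t)
        s = math.sqrt(1.0 - alpha_bar_t)
        return [(x - a * t) / s for x, t in zip(noisy, target)]
    return predict


# Gradient descent on the pixels pulls the render toward the predictor's target.
pixels = [0.0, 1.0]
predictor = oracle_predictor([1.0, 1.0])
for _ in range(20):
    grad = sds_gradient(pixels, predictor, alpha_bar_t=0.5, rng=random.Random(0))
    pixels = [x - 0.5 * g for x, g in zip(pixels, grad)]
```

With this toy predictor the sampled noise cancels out and the gradient reduces to a scaled difference between the render and the target, so repeated updates move the pixels toward the target. In a multiview setting the same kind of signal would be computed for renders from many camera poses, which is what pushes the views toward agreement.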

This approach enables the manipulation of specific objects within a given background NeRF while keeping them consistent with the scene, and it allows inpainting to be extended to 4D while maintaining temporal consistency.

The research highlights the potential for inpainting techniques to enhance virtual and augmented reality applications, content development, and advancements in computer vision.

Summary based on 19 sources