100B-Parameter Protein Language Model xTrimoPGLM-100B Released with Open-Access Weights

April 3, 2025

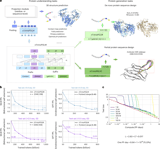

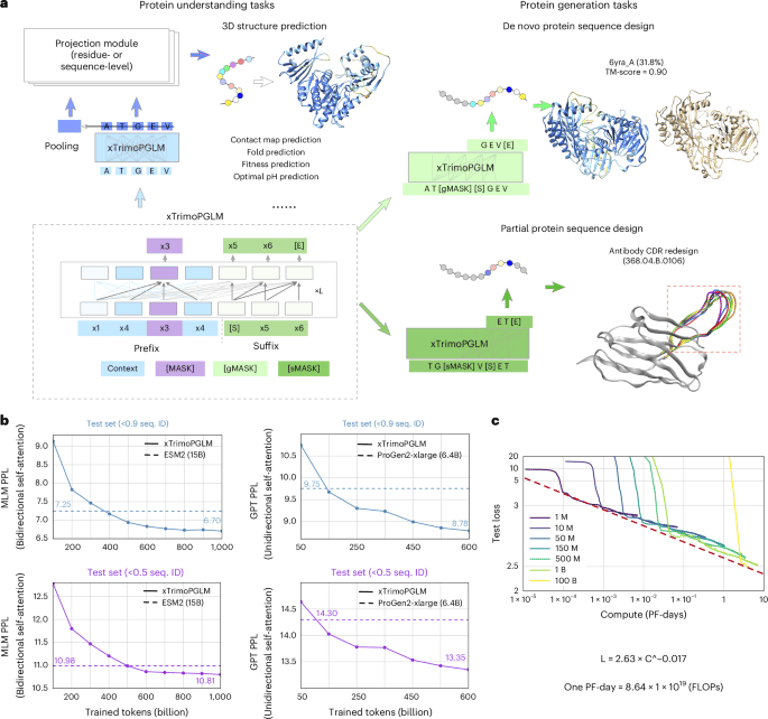

xTrimoPGLM-100B is a 100-billion-parameter pretrained transformer model designed to interpret the language of proteins.

The model was trained on protein sequence data from UniRef90 and ColabFoldDB, complemented by structure prediction datasets from AlphaFold and the PDB.

Training used DeepSpeed v0.6.1, and data analysis was performed in Python with libraries including NumPy, SciPy, and Matplotlib.

This collaborative effort involved contributions from multiple institutions, including BioMap Research in Palo Alto, California, and Tsinghua University in Beijing, China.

The authors express gratitude to Zhipu AI for providing essential computing resources and acknowledge support from the National Science Fund for Distinguished Young Scholars.

For further research and experimentation, eighteen downstream task datasets are now publicly accessible.

The trained xTrimoPGLM weights are also available on Hugging Face, giving researchers broader access to the model.
