Arcee AI Unveils 399-Billion-Parameter Open-Source Model to Challenge Proprietary AI Giants

April 4, 2026

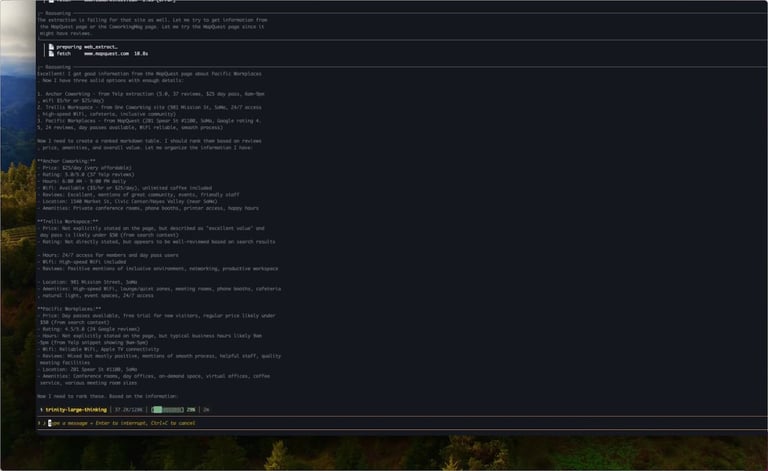

Arcee AI released Trinity-Large-Thinking, a 399-billion-parameter open-source reasoning model, under Apache 2.0 in early April 2026. It is designed for enterprise self-hosting with no usage restrictions.

The Apache 2.0 license permits full customization and commercial use by anyone from individuals to enterprises, positioning the model as a flexible, sovereign infrastructure layer.

Arcee argues that the shift by other labs toward proprietary offerings gives Trinity a strategic edge as an American open-weight leader, and it aims the model at long-horizon agentic workflows.

Benchmark data show Trinity performing competitively on agent-oriented tasks at a favorable price of about $0.90 per million output tokens.
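To put the pricing in concrete terms, here is a rough back-of-the-envelope sketch. It assumes the $0.90 figure is a price per million output tokens, and it takes the "96% lower cost" claim from the WinBuzzer headline listed under Sources. The function names and the 50-million-token workload are hypothetical, chosen only for illustration.

```python
# Assumption: $0.90 is the price per one million output tokens.
PRICE_PER_MILLION_OUTPUT_USD = 0.90


def output_cost_usd(output_tokens: int,
                    price_per_million: float = PRICE_PER_MILLION_OUTPUT_USD) -> float:
    """Cost in USD of generating `output_tokens` tokens at the given price."""
    return output_tokens / 1_000_000 * price_per_million


def implied_baseline_price(price: float, fraction_lower: float) -> float:
    """Price a comparator would need for `price` to be `fraction_lower` cheaper."""
    return price / (1.0 - fraction_lower)


# Hypothetical workload: an agent that emits 50 million output tokens.
workload_cost = output_cost_usd(50_000_000)

# Comparator price implied by the headline's "96% lower" claim (an inference,
# not a figure reported in the summary).
baseline = implied_baseline_price(PRICE_PER_MILLION_OUTPUT_USD, 0.96)

print(f"Workload cost: ${workload_cost:.2f}")
print(f"Implied comparator price: ${baseline:.2f} per million output tokens")
```

Under these assumptions, the headline's claim implies a comparator priced in the low twenties of dollars per million output tokens. The summary does not name that comparator.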

Innovations include SMEBU and a 3:1 mix of local/global attention to sustain long-context performance and improve stability during training.
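As a rough illustration of what a 3:1 local/global mix can look like, the sketch below lays out a layer schedule with three sliding-window ("local") layers for every full-attention ("global") layer. The ordering of layers, the window size, and the function names are assumptions for illustration. The summary does not describe Arcee's actual layout.

```python
def layer_schedule(num_layers: int, local_per_global: int = 3) -> list[str]:
    """Label each layer 'local' or 'global', with one global layer
    after every `local_per_global` local layers (assumed ordering)."""
    period = local_per_global + 1
    return ["global" if (i + 1) % period == 0 else "local"
            for i in range(num_layers)]


def can_attend(query_pos: int, key_pos: int, kind: str, window: int = 4) -> bool:
    """Causal attention rule: global layers see all earlier tokens,
    local layers see only the most recent `window` tokens (hypothetical size)."""
    if key_pos > query_pos:
        return False  # no attending to future tokens
    if kind == "global":
        return True
    return query_pos - key_pos < window


schedule = layer_schedule(8)
print(schedule)
```

In a layout like this, local layers keep per-token attention cost bounded by the window size, while the occasional global layer lets information travel across the whole context.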

Future plans call for distilling frontier reasoning into smaller Mini and Nano models while expanding the open-weight ecosystem and auditable AI stacks for regulated industries.

Trinity’s training data totals 20 trillion tokens (10 trillion tokens of curated web data plus 10 trillion tokens of high-quality synthetic data), with a focus on excluding copyrighted material to reduce IP risk.

A small San Francisco startup built the frontier model in a 33-day, 2,048-GPU training run on a $20 million budget, drawn from a larger Series A totaling around $24 million.

The release signals a shift from chat-based systems to reasoning agents, introducing a dedicated thinking phase to enhance multi-step tool use and cross-turn coherence.

The move fills an open-source gap in the U.S. as other labs shift toward proprietary approaches. Competitors in the landscape include Google’s Gemma 4 and Alibaba.

Trinity uses extreme sparsity via Mixture-of-Experts, activating only about 1.56% of parameters per token to achieve 2–3x faster inference and lower costs on comparable hardware.
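The sparsity idea can be sketched with a minimal top-k router: score every expert for a token, run only the few highest-scoring ones, and weight their outputs. The expert counts below (4 of 256) are an assumption chosen because they reproduce the roughly 1.56% ratio. The summary does not state the real configuration, and the random scores stand in for a learned gating network.

```python
import math
import random


def top_k_route(scores: list[float], k: int) -> list[tuple[int, float]]:
    """Return (expert_index, weight) pairs for the k highest-scoring experts.
    Weights are a softmax over the selected experts only."""
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in top)  # subtract max for numerical stability
    exps = [math.exp(scores[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Assumed, illustrative configuration (not confirmed by the source).
NUM_EXPERTS = 256
ACTIVE_EXPERTS = 4

rng = random.Random(0)
# Stand-in for the router's per-expert scores for a single token.
scores = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

routing = top_k_route(scores, ACTIVE_EXPERTS)
active_fraction = ACTIVE_EXPERTS / NUM_EXPERTS

print(f"Experts used for this token: {[i for i, _ in routing]}")
print(f"Share of experts active: {active_fraction:.2%}")
```

Note that a ratio of active experts is not identical to a ratio of active parameters, since components such as attention layers and embeddings typically run for every token regardless of routing.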

Industry actors in the U.S. push open licensing and frontier performance, signaling potential leadership for American startups in open-source AI.

Summary based on 2 sources

Sources

WinBuzzer • Apr 4, 2026

Arcee AI Launches 399B Top-Performing Open-Source Model at 96% Lower Cost